Monday 26th of May, Dr. Nils Reiter, professor for Digital Humanities and Computational Linguistics, educated us on a topic that is very contemporary, not only in linguistics, but in almost every field in academia, and many outside of it: working and conducting research with Large Language Models like e.g. ChatGPT. More specifically, the discussion centered around a more practical problem in the research around this topic – the aspect of reproducibility when utilizing and analyzing LLMs, and if this is even possible in the first place. Luckily, Dr. Reiter answered this question right away with a yes, however only under very specific circumstances. In order to secure reproducibility, there are three important criteria that have to be fulfilled.

Firstly, one has to use a local LLM for the assigned task. Online models are being constantly fed more information, thus making them ever changing and evolving, making it impossible to assure reproducibility. Local models (stored on your device) however, are in a state of stasis, as there is no additional information being added and therefore no changes are being done to the model. LLMs are a computer program and thus are deterministic by nature. This, in theory, means that the same input should always produce the same output if no changes have been performed. Furthermore, this also touches upon our prevalent topic of Open Science, as the specific LLM and data has to be shared and accessible in order to ensure reproducibility.

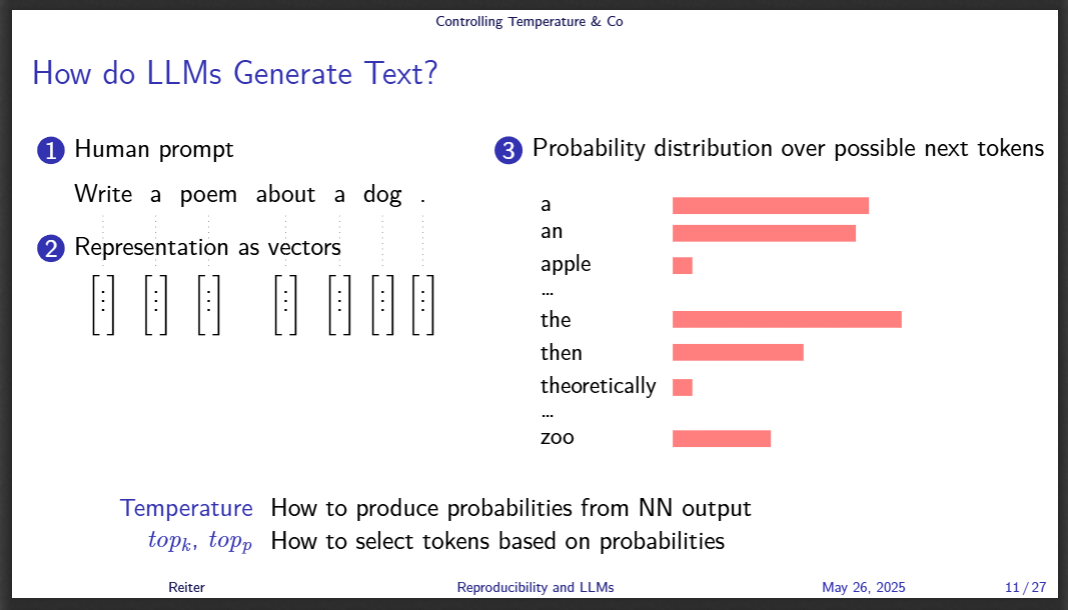

The second aspect a researcher has to consider when working with LLMs is the temperature and other settings of the models. These settings have to be recorded and they have to be made accessible, as the chance of using the exact same parameters by chance is nigh impossible. This raises the question however, what these terms mean in the first place and how it affects the working of the model. The answer is fairly simple: LLMs have certain parameters, that determine what words and outputs they produce. One setting for example is the temperature of the model, i.e. how rare the tokens (e.g. words) are that the model chooses for a prompt response. Therefore, the higher the temperature, the more “creative” the output. This is just one of the many settings involved in creating a unique LLM output, however. Change any of the settings and you will end up with different results. If you are interested in some more variables of LLM programming output, we recommend looking into top-p and top-k.

Lastly, while temperature and other variables affect the chance of specific tokens being chosen, there is one more important aspect influencing the output of LLMs: seed values. As mentioned before, computer systems and programs are deterministic by design, meaning that they are unable to produce true randomness, a concept that only occurs in nature. However, through a certain set of functions, a computer is able to imitate producing a random result. The output of these functions is governed by seeds. Some examples for this in action might be the rolling of a digital set of dice. In order to make the result change and appear to not follow a certain law, multiple seed values can be set, that influence the outcome. Some of those seed values might be based on mouse movement or memory allocation, both which are not completely random in and of themselves, but can produce the illusion of randomness. Therefore, when working with LLMs, the seed values have to be fixed, in order to avoid issues when reproducing the analysis due to the model’s inherent assigned randomness.

If all three of the prior mentioned criteria have been fulfilled, then nothing stands in the way of reproducible research with Large Language Models! If this topic interests you further, we recommend looking into Dr Reiter’s published works (Reiter, n.d.) as he continues his work with on operationalizing complex problems using LLMs. Additionally, you can access the slides of this talk from the OSF repository of ReproducibiliTea in the HumaniTeas: https://osf.io/ckyg7/files/osfstorage.